Rise of the Machines: Artificial Intelligence Dominance in This Era

AI takes center stage, transforming industries. Is human dominance at risk?

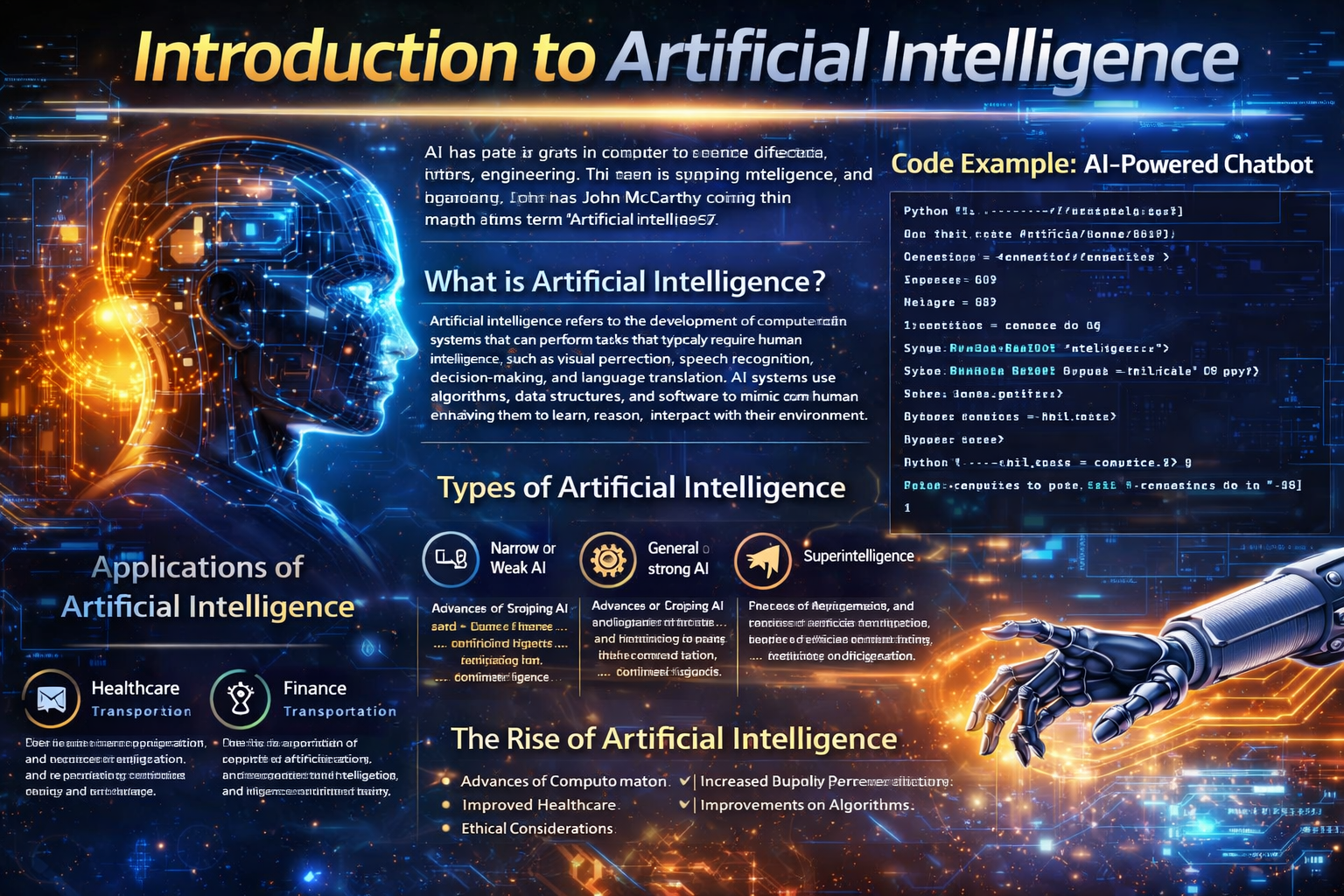

Introduction to Artificial Intelligence

Artificial intelligence (AI) has been a topic of interest for decades, with its roots in computer science, mathematics, and engineering. The term 'artificial intelligence' was coined by John McCarthy, a computer scientist and cognitive scientist, in the 1955 proposal for the Dartmouth workshop, which took place in 1956. Since then, AI has moved from a research concept to technology built into everyday products and services.

What is Artificial Intelligence?

Artificial intelligence refers to the development of computer systems that can perform tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and language translation. AI systems use algorithms, data structures, and software to mimic human cognition, enabling them to learn, reason, and interact with their environment.

Types of Artificial Intelligence

There are several types of AI, including:

- Narrow or Weak AI: Designed to perform a specific task, such as facial recognition, language translation, or playing chess. All AI systems in use today fall into this category.

- General or Strong AI: A hypothetical AI system that can understand, learn, and apply knowledge across a wide range of tasks, much as a human can.

- Superintelligence: A hypothetical AI system that significantly surpasses human cognitive abilities, potentially leading to an intelligence explosion.

Applications of Artificial Intelligence

AI has numerous applications across various industries, including:

- Healthcare: AI-powered systems can analyze medical images, support the diagnosis of diseases, and help develop personalized treatment plans.

- Finance: AI can detect anomalies in financial transactions, forecast market trends, and help optimize investment portfolios.

- Transportation: AI-powered self-driving cars and drones could change the way we travel and transport goods.

- Customer Service: AI-powered chatbots can provide 24/7 customer support, answering queries and resolving routine issues.
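The anomaly detection mentioned under Finance can be illustrated with a very small sketch. It flags transaction amounts whose z-score (distance from the mean, measured in standard deviations) exceeds a threshold. The function name `flag_anomalies`, the threshold of 2.0, and the sample amounts are assumptions made for illustration; real fraud-detection systems rely on far richer features and models.

```python
from statistics import mean, stdev


def flag_anomalies(amounts, threshold=2.0):
    """Return the amounts whose z-score exceeds the threshold."""
    mu = mean(amounts)
    sigma = stdev(amounts)
    if sigma == 0:
        # All amounts are identical, so nothing stands out.
        return []
    return [a for a in amounts if abs(a - mu) / sigma > threshold]


# Hypothetical transaction amounts; one is far larger than the rest.
transactions = [42.0, 38.5, 45.1, 40.3, 39.9, 41.7, 980.0, 43.2]
print(flag_anomalies(transactions))  # [980.0]
```

A single extreme value inflates the standard deviation, so on small samples a z-score test can miss outliers unless the threshold is modest. That limitation is one reason practical systems use more robust methods.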

The Rise of Artificial Intelligence

The rise of AI can be attributed to several factors, including:

- Advances in Computing Power: The increasing processing power of computers has enabled AI systems to handle complex tasks and large datasets.

- Availability of Data: The exponential growth of data has provided AI systems with the necessary fuel to learn and improve.

- Improvements in Algorithms: The development of new algorithms and techniques, such as deep learning, has significantly enhanced the capabilities of AI systems.

Code Example: AI-Powered Chatbot

The script below trains a small intent classifier for a chatbot. It reads example phrases from intents.json, encodes each phrase as a bag-of-words vector, and fits a three-layer Keras network that predicts the intent tag.

    import json
    import pickle
    import random

    import nltk
    import numpy as np
    from nltk.stem import WordNetLemmatizer
    from keras.layers import Dense, Dropout
    from keras.models import Sequential
    from keras.optimizers import SGD

    # word_tokenize and WordNetLemmatizer rely on NLTK data packages;
    # if they are missing, fetch them first with nltk.download()
    lemmatizer = WordNetLemmatizer()

    words = []
    classes = []
    documents = []
    ignore_words = ['?', '!']

    with open('intents.json') as f:
        intents = json.load(f)

    for intent in intents['intents']:
        for pattern in intent['patterns']:
            # tokenize each word in the sentence
            w = nltk.word_tokenize(pattern)
            words.extend(w)
            # add documents in the corpus
            documents.append((w, intent['tag']))
            # add to our classes list
            if intent['tag'] not in classes:
                classes.append(intent['tag'])

    # lemmatize and lowercase each word, drop ignored tokens, remove duplicates
    words = sorted({lemmatizer.lemmatize(w.lower()) for w in words if w not in ignore_words})
    classes = sorted(set(classes))

    with open('words.pkl', 'wb') as f:
        pickle.dump(words, f)
    with open('classes.pkl', 'wb') as f:
        pickle.dump(classes, f)

    # create our training data
    training = []
    output_empty = [0] * len(classes)
    for word_patterns, tag in documents:
        # lemmatize each word to its base form so that related words match
        word_patterns = [lemmatizer.lemmatize(word.lower()) for word in word_patterns]
        # bag of words: 1 if the vocabulary word occurs in the pattern, else 0
        bag = [1 if word in word_patterns else 0 for word in words]
        # output is a '0' for each tag and '1' for current tag (for each pattern)
        output_row = list(output_empty)
        output_row[classes.index(tag)] = 1
        training.append((bag, output_row))

    # shuffle, then split features and labels into separate arrays
    # (a single np.array(training) would be ragged, which recent NumPy rejects)
    random.shuffle(training)
    train_x = np.array([bag for bag, _ in training])
    train_y = np.array([row for _, row in training])
    print('Training data created')

    # create model - 3 layers: 128 neurons, then 64 neurons, then an output layer
    # with one neuron per intent, using softmax to predict the intent
    model = Sequential()
    model.add(Dense(128, input_shape=(len(train_x[0]),), activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(64, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(len(train_y[0]), activation='softmax'))

    # Compile model: stochastic gradient descent with Nesterov momentum.
    # learning_rate replaces the older lr argument; the original decay=1e-6 is
    # omitted because recent Keras optimizers no longer accept it.
    sgd = SGD(learning_rate=0.01, momentum=0.9, nesterov=True)
    model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

    hist = model.fit(train_x, train_y, epochs=200, batch_size=5, verbose=1)
    model.save('chatbot_model.h5')
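The training script stops once the model is saved, so it may help to see how a new sentence would be turned into model input and how the softmax output maps back to an intent. The following is a minimal sketch in plain Python, not the author's inference code: it splits on whitespace instead of using NLTK tokenization and lemmatization, and the vocabulary, class names, probabilities, and the `min_confidence` cutoff are all hypothetical stand-ins for what words.pkl, classes.pkl, and the trained model would supply.

```python
def bag_of_words(sentence, vocabulary):
    """Encode a sentence as a 0/1 vector over a fixed vocabulary."""
    tokens = set(sentence.lower().split())
    return [1 if word in tokens else 0 for word in vocabulary]


def pick_intent(probabilities, classes, min_confidence=0.5):
    """Return the most probable class, or None if the model is unsure."""
    best = max(range(len(classes)), key=lambda i: probabilities[i])
    if probabilities[best] < min_confidence:
        return None
    return classes[best]


# Hypothetical vocabulary and classes (stand-ins for words.pkl / classes.pkl).
vocabulary = ["bye", "hello", "help", "hi", "thanks"]
classes = ["goodbye", "greeting", "thanks"]

vector = bag_of_words("Hello can you help me", vocabulary)
print(vector)  # [0, 1, 1, 0, 0]

# Hypothetical softmax output, as the trained model might return for `vector`.
probabilities = [0.1, 0.8, 0.1]
print(pick_intent(probabilities, classes))  # greeting
```

Returning `None` below a confidence cutoff gives the chatbot a way to fall back to a "sorry, I did not understand" reply instead of guessing.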

The Future of Artificial Intelligence

As AI continues to advance, we can expect significant changes in various aspects of our lives. Some potential developments include:

- Increased Automation: AI-powered robots and machines may replace human workers in industries such as manufacturing, transportation, and customer service.

- Improved Healthcare: AI can help develop personalized medicine, predict patient outcomes, and optimize treatment plans.

- Enhanced Security: AI-powered systems can detect and respond to cyber threats, protecting sensitive information and preventing data breaches.

Challenges and Concerns

While AI has the potential to bring numerous benefits, there are also challenges and concerns that need to be addressed, including:

- Job Displacement: The increasing use of AI-powered automation may lead to job displacement, particularly in industries where tasks are repetitive or can be easily automated.

- Bias and Discrimination: AI systems can perpetuate existing biases and discrimination if they are trained on biased data or designed with a particular worldview.

- Ethics and Accountability: As AI systems become more autonomous, there is a need to establish clear guidelines and regulations to ensure that they are used responsibly and ethically.
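One simple way to look for the kind of bias described above is to compare how often a system makes a favorable decision for each group. The sketch below computes per-group selection rates and the gap between them. The function name `selection_rates`, the group labels, and the decision records are hypothetical, and a gap in rates is only a starting signal for investigation, not proof of discrimination.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


# Hypothetical (group, decision) pairs produced by some model.
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(decisions)
gap = max(rates.values()) - min(rates.values())
print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```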

Conclusion

Artificial intelligence has come a long way since its inception, and its impact on our lives is undeniable. As AI continues to evolve, it is essential to address the challenges and concerns associated with its development and deployment. By doing so, we can ensure that AI is used to benefit humanity and create a better future for all.